
Memcached vs. Redis

2019. 9. 29. 13:54

1. Overview

Both tools are powerful, fast, in-memory data stores that are useful as a cache. Both can help speed up your application by caching database results, HTML fragments, or anything else that might be expensive to generate. But Redis is more powerful, more popular, and better supported than Memcached. Memcached can only do a small fraction of the things Redis can do. Redis is better even where their features overlap.

2. Description

2.1 Memcached

Memcached is a simple volatile cache server. It allows you to store key/value pairs where the value is limited to being a string up to 1MB. It's good at this, but that's all it does. You can access those values by their key at extremely high speed, often saturating available network or even memory bandwidth. When you restart Memcached your data is gone. This is fine for a cache. You shouldn't store anything important there. If you need high performance or high availability there are 3rd party tools, products, and services available.

2.2 Feature Comparison of the Two In-Memory Data Stores

| Feature | Memcached | Redis |
| --- | --- | --- |
| Sub-millisecond latency (speed) | YES | YES |
| Developer ease of use | YES | YES |
| Data partitioning | YES | YES |
| Support for a broad set of programming languages | YES | YES |
| Advanced data structures | - | YES |
| Multithreaded architecture | YES | - |
| Snapshots | - | YES |
| Replication | - | YES |
| Transactions | - | YES |
| Pub/Sub | - | YES |
| Lua scripting | - | YES |
| Geospatial support | - | YES |
| Memory usage | | Redis better |
| Disk I/O dumping | | Redis better |
| Scaling | | Redis better |

3. Memory Management Scheme

3.1 Redis

3.1.1 Not all data storage occurs in memory.

When physical memory is full, Redis may swap values that have not been used for a long time to disk. (This describes the legacy virtual memory feature, which later Redis releases removed.) Redis always keeps all key information in memory. If it detects that memory usage exceeds the threshold, it triggers the swap operation. Redis decides which keys' values to swap to disk based on

swappability = age*log(size_in_memory)

It then persists the values of the selected keys to disk and erases them from memory. This feature lets Redis hold a dataset larger than the machine's memory capacity. The machine's memory must still hold all the keys, because only values are swapped, never keys.
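As a rough illustration of the formula above, the sketch below scores a few values and picks the one most eligible for swapping. The natural logarithm, the `candidates` data, and both function names are assumptions made for the example; they are not taken from the Redis source.

```python
import math


def swappability(age_seconds: float, size_in_memory: int) -> float:
    """Score from the formula above: age * log(size_in_memory).

    The base of the logarithm is assumed to be e.
    """
    return age_seconds * math.log(size_in_memory)


def pick_swap_candidate(values: dict) -> str:
    """Return the key whose value has the highest swappability score.

    `values` maps key -> (age_seconds, size_in_memory); this layout is
    hypothetical and only serves the example.
    """
    return max(values, key=lambda key: swappability(*values[key]))


candidates = {
    "session:1": (10, 4096),       # recently used, medium size
    "report:old": (3600, 65536),   # idle for an hour, large
    "flag": (7200, 2),             # idle the longest, but tiny
}
print(pick_swap_candidate(candidates))
```

The score grows linearly with idle time but only logarithmically with size, so a large value that has been idle for a while outranks a tiny value that has been idle even longer.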

While Redis is swapping in-memory data to disk, the main thread that serves requests and the child thread that performs the swap share that part of memory. If you update data that is being swapped, Redis blocks the operation until the child thread completes the swap. When you read a key whose value is not in memory, Redis must load the data from the swap file before returning it to the requester. This is where the I/O thread pool matters. By default, Redis blocks: it responds only after all the required swap data has been loaded. This policy suits batch operations with a small number of clients, but it cannot meet the high-concurrency demands of a large website. You can, however, configure the size of the I/O thread pool so that read requests that need data from the swap file are served concurrently, which shortens the blocking time.

4. Self-designed Memory Management Mechanisms

For memory-based database systems like Redis and Memcached, memory management efficiency is a key factor in the system's performance. In traditional C programs, the malloc/free functions are the most common way to allocate and release memory. However, this approach has serious drawbacks: first, unmatched malloc and free calls easily cause memory leaks; second, frequent calls leave many memory fragments that are hard to recycle and reuse, which lowers memory utilization; and finally, the underlying system calls cost far more than ordinary function calls. Therefore, to improve memory management efficiency, these systems do not use malloc/free calls directly. Both Redis and Memcached adopt their own memory management mechanisms, but the implementations differ considerably.

4.1 Redis

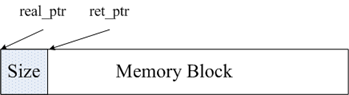

Redis implements its memory management mainly in two source files, zmalloc.h and zmalloc.c. To make memory easier to manage, Redis stores the size of each block in the block's header after allocating it. As shown in the figure, real_ptr is the pointer returned when Redis calls malloc. Redis writes the block size into the header, which occupies a fixed, known amount of memory (the size of a size_t), and then returns ret_ptr, the address just past the header. When memory needs to be released, ret_ptr is passed to the memory management code. From ret_ptr, the code can easily calculate real_ptr and then pass real_ptr to free to release the memory.

Redis records the distribution of all allocations in an array of length ZMALLOC_MAX_ALLOC_STAT. Each element of the array holds the number of memory blocks currently allocated by the program, and the index of the element is the size of those blocks. In the source code, this array is zmalloc_allocations. For example, zmalloc_allocations[16] is the number of allocated memory blocks that are 16 bytes long. zmalloc.c also contains a static variable, used_memory, that records the total size of the currently allocated memory. In general, then, Redis uses a thin wrapper around malloc/free, which is much simpler than the memory management mechanism of Memcached.
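The size-header idea described above can be modelled with a toy allocator over a single bytearray. This is only a sketch of the technique, not the code in zmalloc.c: `TrackedHeap`, its bump-pointer allocation, and the decision never to reuse freed space are all assumptions made to keep the example short.

```python
import struct

# Size of a native size_t on this platform, used as the header size.
PREFIX_SIZE = struct.calcsize("N")


class TrackedHeap:
    """Toy model of a size-prefixed allocator (hypothetical, for illustration)."""

    def __init__(self, capacity: int):
        self.memory = bytearray(capacity)
        self.next_free = 0     # bump pointer; freed space is not reused here
        self.used_memory = 0   # running total, like the used_memory counter

    def alloc(self, size: int) -> int:
        real_ptr = self.next_free
        if real_ptr + PREFIX_SIZE + size > len(self.memory):
            raise MemoryError("toy heap exhausted")
        # Store the requested size in the header that starts at real_ptr.
        struct.pack_into("N", self.memory, real_ptr, size)
        self.next_free += PREFIX_SIZE + size
        self.used_memory += PREFIX_SIZE + size
        return real_ptr + PREFIX_SIZE   # ret_ptr handed to the caller

    def free(self, ret_ptr: int) -> None:
        real_ptr = ret_ptr - PREFIX_SIZE   # step back to the header
        (size,) = struct.unpack_from("N", self.memory, real_ptr)
        self.used_memory -= PREFIX_SIZE + size


heap = TrackedHeap(256)
ptr = heap.alloc(16)
print(heap.used_memory)   # 16 bytes of payload plus the header
heap.free(ptr)
print(heap.used_memory)
```

Because the size travels with the block, `free` needs only the pointer it was given to know how much to subtract from the running total.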

4.2 Memcached

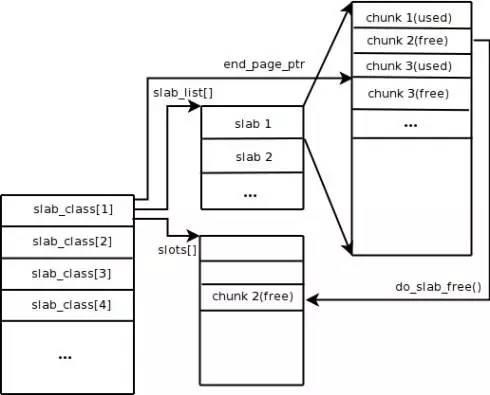

Memcached uses the Slab Allocation mechanism for memory management by default. Its main idea is to divide the allocated memory into chunks of predefined lengths and to store each key-value record in a chunk of suitable length, which avoids the memory fragmentation problem. By design, the Slab Allocation mechanism is intended only for external data, that is, all key-value data is stored in the Slab Allocation system. Memcached's other memory requests are served through ordinary malloc/free calls, because their number and frequency are too low to affect overall system performance.

The principle of Slab Allocation is very simple. As shown in the figure, Memcached first requests a large block of memory from the operating system, divides it into chunks of various sizes, and then groups chunks of the same size into a Slab Class. The chunk is the smallest unit for storing key-value data. You can control the chunk size of each Slab Class by specifying a Growth Factor at Memcached startup. Suppose the Growth Factor in the figure is 1.25. If the chunks in the first group are 88 bytes in size, the chunks in the second group will be 112 bytes (88 × 1.25 = 110, rounded up for alignment). The remaining groups follow the same rule.
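The growth-factor rule can be sketched as a short loop. The 8-byte alignment is an assumption, chosen because it reproduces the 88 to 112 step in the example; `slab_chunk_sizes` and its parameters are hypothetical names, not Memcached's API.

```python
def slab_chunk_sizes(first: int = 88, factor: float = 1.25,
                     align: int = 8, count: int = 6) -> list:
    """Return the chunk size of the first `count` slab classes.

    Each class is the previous size multiplied by `factor`, rounded up
    to a multiple of `align` (the alignment value is an assumption).
    """
    sizes = []
    size = first
    for _ in range(count):
        if size % align:
            size += align - size % align   # round up to the alignment
        sizes.append(size)
        size = int(size * factor)          # grow for the next class
    return sizes


print(slab_chunk_sizes())
```

A smaller growth factor gives more slab classes with finer size steps, which reduces wasted space per item at the cost of more classes to manage.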

When Memcached receives data from the client, it first selects the most appropriate Slab Class according to the data size, and then looks up that Slab Class's list of idle chunks to find a chunk in which to store the data. When a record expires or is evicted, the chunk it occupied can be recycled and returned to the idle list.

From the above process, we can see that Memcached manages memory very efficiently and does not create memory fragments. Its biggest drawback, however, is that it may waste space. Because every chunk has a fixed length, variable-length data cannot always fill the chunk it is stored in. As shown in the figure, when we cache 100 bytes of data in a 128-byte chunk, the unused 28 bytes go to waste.
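The selection, recycling, and waste described above can be sketched together. `SlabClasses` is a hypothetical model: chunks are represented by integer ids rather than real memory, and the class sizes in the usage lines are picked to match the 100-byte example.

```python
import bisect


class SlabClasses:
    """Toy model of slab classes with one idle-chunk list per class."""

    def __init__(self, chunk_sizes):
        self.chunk_sizes = sorted(chunk_sizes)
        self.free_chunks = {size: [] for size in self.chunk_sizes}
        self._next_id = 0   # ids stand in for chunk addresses

    def store(self, item_size: int):
        """Return (chunk_id, chunk_size, wasted_bytes) for an item."""
        # The smallest class whose chunks can hold the item.
        index = bisect.bisect_left(self.chunk_sizes, item_size)
        if index == len(self.chunk_sizes):
            raise ValueError("item larger than the largest chunk")
        chunk_size = self.chunk_sizes[index]
        idle = self.free_chunks[chunk_size]
        if idle:
            chunk_id = idle.pop()       # reuse a recycled chunk
        else:
            chunk_id = self._next_id    # carve out a new chunk
            self._next_id += 1
        return chunk_id, chunk_size, chunk_size - item_size

    def release(self, chunk_id: int, chunk_size: int) -> None:
        """Return an expired or evicted item's chunk to the idle list."""
        self.free_chunks[chunk_size].append(chunk_id)


slabs = SlabClasses([64, 128, 256])
chunk_id, chunk_size, wasted = slabs.store(100)
print(chunk_size, wasted)
```

The wasted bytes are internal to the chunk, so they never fragment the heap; they are simply unavailable until the chunk is released and reused.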

5. Data Persistence

Redis supports persisting in-memory data and provides two main persistence policies: RDB snapshots and AOF logs. Memcached does not support data persistence. For general business requirements, we suggest using RDB for persistence because its overhead is much lower than that of AOF logs. For applications that cannot tolerate any risk of data loss, we recommend AOF logs.

5.1 RDB Snapshot

Redis can store a snapshot of the current data in a data file for persistence; this is the RDB snapshot. But how can a snapshot be generated for a database that is continuously being written to? Redis relies on the copy-on-write behavior of the fork system call. When a snapshot is created, the current process forks a subprocess that iterates over all the data and writes it to the RDB file. The timing of RDB snapshot generation is configured through the save directive of Redis. For example, you can have a snapshot generated every 10 minutes, provided that at least 1,000 writes have occurred in that time. You can also configure multiple rules to apply together. These rules are defined in the Redis configuration file. You can also set them at runtime with the CONFIG SET command, without restarting Redis.
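A save rule pairs a time window with a minimum number of writes; in redis.conf the 10-minute example would be written as `save 600 1000`. The sketch below models how several such rules combine. `should_snapshot` and the second rule in `rules` are hypothetical, and real Redis evaluates its rules internally rather than through a function like this.

```python
def should_snapshot(rules, seconds_since_last_save: float,
                    changes_since_last_save: int) -> bool:
    """Return True if any (seconds, changes) rule is satisfied.

    A rule fires when at least `seconds` have passed since the last
    save AND at least `changes` writes have happened in that time.
    """
    return any(
        seconds_since_last_save >= seconds
        and changes_since_last_save >= changes
        for seconds, changes in rules
    )


# (seconds, changes): the first rule is the 10-minute example,
# the second is an extra rule invented for illustration.
rules = [(600, 1000), (60, 10000)]

print(should_snapshot(rules, 700, 1500))   # first rule satisfied
print(should_snapshot(rules, 700, 10))     # too few writes
print(should_snapshot(rules, 90, 20000))   # second rule satisfied
```

Listing several rules lets a busy instance snapshot sooner while an idle one avoids rewriting an unchanged file.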

The Redis RDB file is, to an extent, protected against corruption because the write is performed in a separate process. When generating a new RDB file, the subprocess first writes the data to a temporary file and then renames the temporary file to the RDB file through the atomic rename system call, so that a complete RDB file is always available whenever Redis suffers a fault. The RDB file is also part of the internal implementation of Redis master-slave synchronization. However, RDB has a weakness: if the database runs into a problem, the data saved in the RDB file may not be up to date, and everything written between the last RDB file generation and the failure is lost. Note that for some businesses, this is tolerable.
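The write-to-temporary-file-then-rename pattern is general and can be sketched outside Redis. `write_snapshot_atomically` and the file names are hypothetical; the sketch assumes the temporary file lives in the same directory as the target, so the final rename stays on one filesystem.

```python
import os
import tempfile


def write_snapshot_atomically(path: str, payload: bytes) -> None:
    """Write `payload` to a temp file, then atomically rename it to `path`.

    A reader opening `path` sees either the old complete file or the
    new complete file, never a half-written one.
    """
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, prefix="temp-", suffix=".rdb")
    try:
        with os.fdopen(fd, "wb") as tmp:
            tmp.write(payload)
            tmp.flush()
            os.fsync(tmp.fileno())     # make sure the bytes reach the disk
        os.replace(tmp_path, path)     # atomic rename over the old file
    except BaseException:
        if os.path.exists(tmp_path):
            os.unlink(tmp_path)        # do not leave a stale temp file
        raise


with tempfile.TemporaryDirectory() as directory:
    target = os.path.join(directory, "dump.rdb")
    write_snapshot_atomically(target, b"snapshot v1")
    write_snapshot_atomically(target, b"snapshot v2")
    with open(target, "rb") as snapshot:
        print(snapshot.read())
```

If the process dies before the rename, the previous snapshot is untouched; only the temporary file is incomplete.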

5.2 AOF log

AOF stands for Append Only File. It is an append-only log file. Unlike the binlog of general databases, the AOF is readable plaintext, and its content consists of standard Redis commands. Redis appends only the commands that change data to the AOF. Because every data-changing command generates a log entry, the AOF file keeps growing. To address this, Redis provides another feature: AOF rewrite. An AOF rewrite regenerates the AOF file so that the new file contains only one operation per record, instead of the multiple operations on the same value recorded in the old file. The generation process is similar to the RDB snapshot: Redis forks a process, traverses the data, and writes it to a new temporary AOF file. While the new file is being written, Redis continues to append incoming write operations to the old AOF file and also records them in an in-memory buffer. When the traversal completes, Redis writes all the buffered entries to the temporary file in one go. It then calls the atomic rename system call to replace the old AOF file with the new one.
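The effect of an AOF rewrite can be sketched on a tiny command log. This is a simplification under stated assumptions: only three commands (SET, INCR, DEL) are modelled, commands are plain tuples, and the sketch rebuilds the dataset by replaying the old log, whereas Redis, as described above, traverses the data it already holds in memory. `rewrite_aof` is a hypothetical name.

```python
def rewrite_aof(commands):
    """Collapse a command log into one SET per surviving key."""
    state = {}
    for name, key, *args in commands:
        if name == "SET":
            state[key] = args[0]
        elif name == "INCR":
            state[key] = str(int(state.get(key, "0")) + 1)
        elif name == "DEL":
            state.pop(key, None)
        else:
            raise ValueError(f"command not modelled in this sketch: {name}")
    # One operation per record, as in the rewritten file.
    return [("SET", key, value) for key, value in state.items()]


old_log = [
    ("SET", "counter", "1"),
    ("INCR", "counter"),
    ("INCR", "counter"),
    ("SET", "tmp", "x"),
    ("DEL", "tmp"),
]
print(rewrite_aof(old_log))
```

Five logged commands shrink to a single SET, and replaying the rewritten log produces the same final dataset as replaying the original.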

Writing the AOF is a file write operation whose purpose is to get the operation log onto disk. After Redis calls write for the AOF, the appendfsync option controls when the data is actually flushed to disk through the fsync system call. The three appendfsync settings below are ordered from the weakest to the strongest durability guarantee.

5.2.1 appendfsync no

When appendfsync is set to no, Redis does not actively call fsync to synchronize the AOF log to disk. Synchronization depends entirely on the operating system's scheduling. Most Linux systems flush the buffered data to disk about once every 30 seconds.

5.2.2 appendfsync everysec

When appendfsync is set to everysec, Redis calls fsync once every second by default to write the buffered data to disk. But if an fsync call takes longer than 1 second, Redis delays the next fsync and waits another second, so that fsync is called after two seconds. That fsync is performed no matter how long it takes. Because the file descriptor is blocked while the fsync is in progress, the current write operation is blocked as well. In short, in the vast majority of cases Redis performs fsync once every second, and in the worst case once every two seconds. Most database systems call this technique group commit: the data of multiple writes is combined and written to the log on disk in one operation.

5.2.3 appendfsync always

When appendfsync is set to always, every write operation calls fsync once. With this setting the data is safest. Of course, because fsync runs on every write, performance suffers.
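The three settings can be compared with a small append-only writer. This is a sketch under assumptions, not how Redis is implemented: `AofWriter` and `count_fsyncs` are hypothetical, the everysec check runs inline on the next append rather than in the background, and a fake clock is injected so the behavior is repeatable.

```python
import os
import tempfile
import time


class AofWriter:
    """Append-only writer with a configurable fsync policy."""

    def __init__(self, path, policy="everysec", clock=time.monotonic):
        if policy not in ("no", "everysec", "always"):
            raise ValueError(f"unknown policy: {policy}")
        self._file = open(path, "ab")
        self._policy = policy
        self._clock = clock
        self._last_fsync = clock()
        self.fsync_calls = 0

    def append(self, command: bytes) -> None:
        self._file.write(command)
        self._file.flush()   # hand the data to the operating system
        if self._policy == "always":
            self._fsync()
        elif self._policy == "everysec" and self._clock() - self._last_fsync >= 1.0:
            self._fsync()
        # policy "no": leave flushing to disk to the operating system

    def _fsync(self) -> None:
        os.fsync(self._file.fileno())   # force the data onto the disk
        self._last_fsync = self._clock()
        self.fsync_calls += 1

    def close(self) -> None:
        self._file.close()


def count_fsyncs(policy: str, writes: int = 5) -> int:
    """Append `writes` commands while a fake clock advances 0.5 s per write."""
    now = [0.0]
    with tempfile.TemporaryDirectory() as directory:
        path = os.path.join(directory, "appendonly.aof")
        writer = AofWriter(path, policy, clock=lambda: now[0])
        for _ in range(writes):
            now[0] += 0.5
            writer.append(b"SET key value\r\n")
        writer.close()
        return writer.fsync_calls


for policy in ("no", "everysec", "always"):
    print(policy, count_fsyncs(policy))
```

With everysec, several writes share one fsync, which is the group-commit effect; with always, every write pays for its own.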

6. Cluster Management

Whereas Memcached can only achieve distributed storage on the client side, Redis prefers to build distributed storage on the server side. Recent versions of Redis support distributed storage through Redis Cluster, a distributed deployment mode of Redis that tolerates a Single Point of Failure (SPOF). It has no central node and is capable of linear expansion. The figure below provides the distributed storage architecture of Redis Cluster. Inter-node communication uses a binary protocol, while node-client communication uses an ASCII protocol. For data placement, Redis Cluster divides the entire key space into 16,384 hash slots and lets each node hold one or more of them. That is to say, Redis Cluster currently supports a maximum of 16,384 master nodes. The distribution algorithm that Redis Cluster uses is also simple: crc16(key) % HASH_SLOTS_NUMBER.
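The slot formula can be tried out directly. The sketch assumes the CRC16 variant is the XMODEM one (polynomial 0x1021, initial value 0), which Python exposes as `binascii.crc_hqx`, and it takes the slot count as a parameter defaulting to 16384, the number used by released versions of Redis Cluster. It ignores hash tags, and `key_slot` is a hypothetical helper name.

```python
import binascii


def key_slot(key: bytes, slots: int = 16384) -> int:
    """Map a key to a hash slot: crc16(key) % HASH_SLOTS_NUMBER."""
    return binascii.crc_hqx(key, 0) % slots


for key in (b"user:1000", b"user:1001", b"cart:42"):
    print(key.decode(), key_slot(key))
```

Because the slot depends only on the key, every client and node computes the same placement without consulting a central directory.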

Redis Cluster introduces master and slave nodes to keep data available when a single node fails. In the setup described here, every master node has two corresponding slave nodes for redundancy, so any two failed nodes in the whole cluster will not impair data availability. When a master node goes down, the cluster automatically chooses one of its slave nodes to become the new master.

Memcached is a full-memory data buffering system. Although Redis supports data persistence, the full-memory is the essence of its high performance. For a memory-based store, the size of the memory of the physical machine is the maximum data storing capacity of the system. If the data size you want to handle surpasses the physical memory size of a single machine, you need to build distributed clusters to expand the storage capacity.

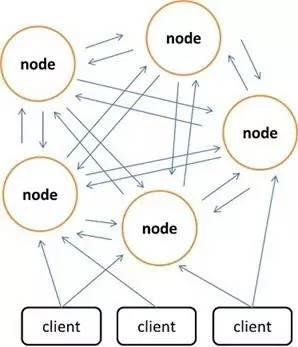

Memcached itself does not support a distributed mode. You can only achieve distributed storage with Memcached on the client side, through distribution algorithms such as consistent hashing. The figure above shows how distributed storage is implemented for Memcached. Before the client sends data to the Memcached cluster, it first calculates the target node for the data with its built-in distribution algorithm and then sends the data directly to that node for storage. Likewise, when the client queries data, it calculates which node holds the data and then sends the query directly to that node.
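Client-side placement with consistent hashing can be sketched as a hash ring. The choice of MD5, the 100 virtual points per node, and the `HashRing` class are assumptions made for the example; real Memcached client libraries differ in their details.

```python
import bisect
import hashlib


class HashRing:
    """Minimal consistent-hash ring for choosing a cache node per key."""

    def __init__(self, nodes, vnodes: int = 100):
        ring = []
        for node in nodes:
            # Several virtual points per node spread the load more evenly.
            for i in range(vnodes):
                ring.append((self._hash(f"{node}#{i}"), node))
        ring.sort()
        self._points = [point for point, _ in ring]
        self._nodes = [node for _, node in ring]

    @staticmethod
    def _hash(text: str) -> int:
        digest = hashlib.md5(text.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big")

    def node_for(self, key: str) -> str:
        """Return the first node clockwise from the key's position."""
        index = bisect.bisect_right(self._points, self._hash(key))
        return self._nodes[index % len(self._nodes)]


ring = HashRing(["cache-a:11211", "cache-b:11211", "cache-c:11211"])
print(ring.node_for("user:1000"))
```

The client uses the same ring for writes and reads, so a key is always looked up on the node it was stored on. When a node is removed, only the keys that lived on it move elsewhere.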

7. Data Types Supported

7.1 Memcached

Memcached only supports data records with a simple key-value structure.

7.2 Redis

Unlike Memcached, which only supports data records with a simple key-value structure, Redis supports much richer data types, including String, Hash, List, Set, and Sorted Set. Redis uses a redisObject internally to represent all keys and values. The main fields of the redisObject are as follows.

The type field represents the data type of a value object. The encoding field indicates how that data type is stored inside Redis. For example, type=string means that the value stores an ordinary string, and the corresponding encoding may be raw or int. If it is int, Redis stores and represents the string as a number. Of course, this is only possible when the string can be represented as a number, such as the strings "123" and "456". The vm fields are allocated memory only when the Redis virtual memory feature is enabled. This feature is off by default.
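The relationship between type and encoding for strings can be sketched as follows. `RedisObjectSketch` and `make_string_object` are hypothetical names, only the two fields discussed above are modelled, and the rule used to detect a numeric string is a simplification that ignores any integer-range limits Redis may apply.

```python
from dataclasses import dataclass
from typing import Union


@dataclass
class RedisObjectSketch:
    """Illustrative stand-in for the type and encoding fields."""
    type: str
    encoding: str
    value: Union[int, str]


def make_string_object(text: str) -> RedisObjectSketch:
    """Use the int encoding when the string is exactly a decimal integer."""
    try:
        number = int(text)
    except ValueError:
        return RedisObjectSketch("string", "raw", text)
    # Round-trip check: "007" or " 12" must stay raw to preserve the text.
    if str(number) == text:
        return RedisObjectSketch("string", "int", number)
    return RedisObjectSketch("string", "raw", text)


for text in ("123", "456", "hello", "007"):
    print(text, make_string_object(text).encoding)
```

The round-trip check matters because the original string must be recoverable exactly from the stored number.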

